Breaking Down a Breakthrough

A Template

If you’re like me and you’re reading about new quantum breakthroughs every day, it can be hard to distinguish truly solid incremental steps from those that simply sound good.

So I’m going to recommend a template for your review of quantum hardware and software breakthrough claims. It has worked for me when I attempt to separate the hype from the reality.

Image source: Brian Lenahan/Midjourney

Breakdown Of Strengths & Limitations

When I think about a breakthrough, I gauge both its strengths and limitations, not from the perspective of a physicist, but from that of a business user keenly anticipating a new tool for organizations to leverage and benefit from.

I tend to examine a new breakthrough through the following lenses:

Scalability & Resource Overhead

(How does it scale with problem size? Logical-to-physical qubit ratio, total physical resources projected for utility problems.)

Error Correction & Fault-Tolerance Capability

(Threshold crossed? Logical error rate vs. physical? Active/real-time QEC? Break-even for algorithmic circuits? Distance scaling demonstrated?)

Performance vs. Classical

(Speedup/quality vs. best classical solvers? Provable advantage? Runtime realism including decoding latency?)

Cost & Practicality

(Estimated infrastructure/energy cost? Manufacturing feasibility? Cryo/operational demands?)

Architecture Portability & Generality

(Tied to one platform? Portable gates/codes? Connectivity advantages/drawbacks?)

Roadmap & Milestone Alignment

(Fits published company/gov roadmaps? Advances key hurdles like universal FT gates or modular scaling?)

Nature of Advance

(Incremental (e.g., better fidelity on existing setup) or revolutionary (e.g., new paradigm, threshold crossing, first end-to-end FT algorithm)?)
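One way to keep the template honest is to treat it as a checklist that flags any lens you skipped. Below is a minimal Python sketch of that idea. The seven lens names come from the list above; the `Review` class, its methods, and the 0-to-2 scoring scale are hypothetical, invented here purely for illustration.

```python
from dataclasses import dataclass, field

# Lens names are taken from the template above. Everything else
# (the Review class, the 0-2 scale) is a hypothetical illustration.
LENSES = [
    "Scalability & Resource Overhead",
    "Error Correction & Fault-Tolerance Capability",
    "Performance vs. Classical",
    "Cost & Practicality",
    "Architecture Portability & Generality",
    "Roadmap & Milestone Alignment",
    "Nature of Advance",
]


@dataclass
class Review:
    claim: str
    notes: dict = field(default_factory=dict)  # lens -> (score, comment)

    def rate(self, lens, score, comment=""):
        """Record a score (0 = weak, 1 = mixed, 2 = strong) for one lens."""
        if lens not in LENSES:
            raise ValueError(f"unknown lens: {lens}")
        if score not in (0, 1, 2):
            raise ValueError("score must be 0, 1, or 2")
        self.notes[lens] = (score, comment)

    def unreviewed(self):
        """Return the lenses that have not been rated yet."""
        return [lens for lens in LENSES if lens not in self.notes]

    def summary(self):
        """Return a plain-text summary with one line per lens."""
        lines = [self.claim]
        for lens in LENSES:
            score, comment = self.notes.get(lens, ("?", "not yet reviewed"))
            lines.append(f"  [{score}] {lens}: {comment}")
        return "\n".join(lines)


# Example usage with made-up ratings.
review = Review("Example breakthrough claim")
review.rate("Nature of Advance", 1, "strong incremental step")
review.rate("Performance vs. Classical", 0, "no advantage claimed")
print(review.summary())
print("Still to review:", len(review.unreviewed()), "lenses")
```

The point of the `unreviewed()` check is simply that a press release rarely volunteers information on every lens, so the gaps are often as informative as the scores.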

Example

Let’s take one recent example from two significant quantum industry players, JPMorgan Chase and Quantinuum. In this instance, without hype, the collaboration between JPMorgan Chase’s Global Technology Applied Research team and Quantinuum produced a legitimate and impressive advance: end-to-end fault-tolerant (FT) execution of non-trivial quantum algorithms using only fault-tolerant gadgets on real hardware. A first. The arXiv preprint is titled “Fault-tolerant execution of error-corrected quantum algorithms” (arXiv:2603.04584v1, dated March 4, 2026).

Now let’s use the template of factors to analyse the strengths and limitations of this new development.

Roadmap Alignment

Image source: Quantinuum

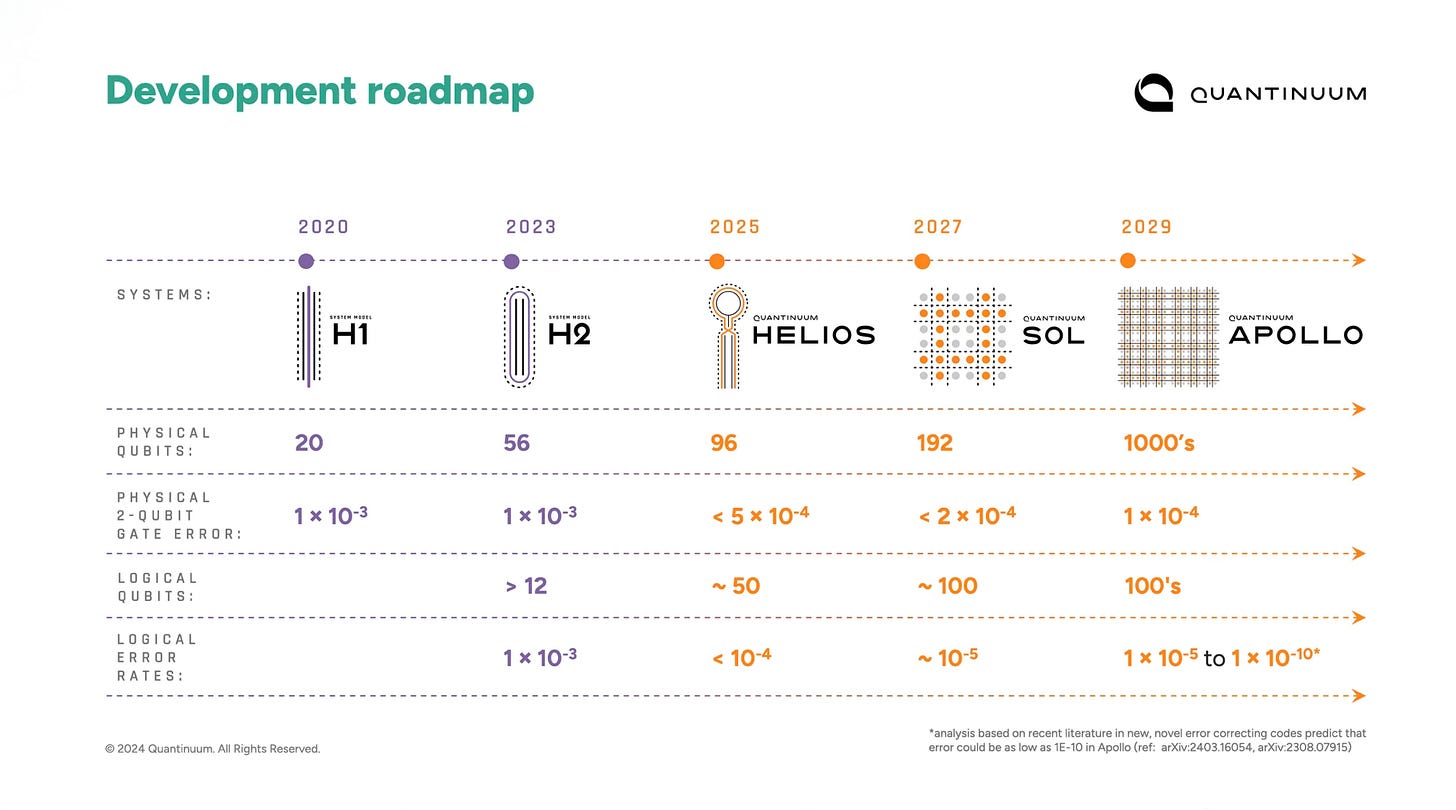

The original Quantinuum public roadmap was announced on September 10, 2024, targeting universal, fully fault-tolerant quantum computing by 2030 (with the flagship fifth-generation system Apollo slated for launch in 2029). It outlined a progression from the H-series (e.g., H2) through subsequent generations like Helios (2025), Sol (2027), and Apollo (2029), focusing on scaling physical qubits, logical qubits, error correction, and integration with tools like NVIDIA CUDA-Q. In June 2025, Quantinuum highlighted milestones (e.g., logical qubit extraction from 98 physical qubits and “derisking” the path to Apollo by 2029) as aligning with and reinforcing the roadmap. The Helios system launched in November 2025 (with 98 physical qubits, exceeding initial plans), explicitly “on schedule” with the roadmap. A November 5, 2025, revision to the company’s technical perspective/blog includes the latest hardware status (e.g., Helios at 98 qubits) and reaffirms the path to Apollo and beyond. In DARPA-related announcements in November 2025, Quantinuum added Lumos as an extension for larger systems into the 2030s, building on the core 2024 framework. Check.

Strengths and Positive Aspects

The recent collaboration executes two algorithms fully fault-tolerantly with error correction: the Quantum Approximate Optimization Algorithm (QAOA) on up to 12 logical qubits (97 physical qubits, 2132 physical two-qubit gates) for problems like low autocorrelation binary sequences (LABS) and portfolio optimization; and the Harrow-Hassidim-Lloyd (HHL) algorithm for solving a Poisson equation instance.

Uses the Steane code ([[7,1,3]]), a small but well-understood code, on Quantinuum’s H2 (H2-1, H2-2) and Helios trapped-ion processors (QCCD architecture).

Achieves a strong logical T gate implementation with infidelity ≈ 2.6(4) × 10⁻³, outperforming prior hardware results.

Demonstrates performance scaling: Increasing QAOA layers and T-gate approximations improves results despite added complexity; up to 8 logical qubits with 9 T gates performs comparably to unencoded runs.

Shows benefits of active quantum error correction (QEC) cycles (e.g., n_QEC = 2 improves fidelity, though n_QEC = 4 adds more noise than benefit) and higher repeat-until-success (RUS) limits for state preparation (reducing failures nearly to zero, with post-selection mitigating fidelity costs).

Operates near break-even for complex algorithmic circuits (logical performance approaching or rivaling physical in some regimes, with dozens of physical qubits and hundreds of gates).

Leverages dynamic circuits with mid-circuit measurement and feedback, a capability Quantinuum excels at.

This pushes beyond prior work (mostly component-level or partial FT demos) toward integrated FT application execution. It’s a credible step toward scalable FT quantum computing, especially on ion-trap hardware, and highlights effective industry-academia collaboration.
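For readers who want to sanity-check the Steane-code figures quoted above, here is a rough back-of-the-envelope sketch in Python. In [[n, k, d]] notation, n physical qubits encode k logical qubits at distance d, and a distance-d code can correct up to ⌊(d−1)/2⌋ errors per code block. The function names are hypothetical, and how the physical qubits beyond the encoded data are used is not broken down in this article; treating them as ancilla or auxiliary qubits is my assumption.

```python
def data_qubits_for(logical_qubits, n=7):
    """Physical data qubits needed when each logical qubit uses an [[n, 1, d]] code."""
    return logical_qubits * n


def correctable_errors(distance):
    """Maximum number of errors a distance-d code can correct per code block."""
    return (distance - 1) // 2


logical = 12            # largest QAOA run cited above
total_physical = 97     # physical qubits cited above for that run

data = data_qubits_for(logical)      # Steane code: n = 7
remainder = total_physical - data    # assumed to be ancilla/auxiliary qubits

print("Data qubits:", data)                             # 84
print("Remaining qubits:", remainder)                   # 13
print("Correctable errors at d=3:", correctable_errors(3))  # 1
```

So roughly seven of every eight qubits in that run are spent on encoding, and each code block can still only correct a single error, which is worth keeping in mind when reading the limitations.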

Critiques and Limitations

While groundbreaking experimentally, the paper has several important limitations typical of noisy intermediate-scale quantum (NISQ)-era FT demonstrations:

Not yet below break-even for full algorithms — The system operates “near break-even,” but logical circuits do not consistently outperform unencoded physical ones across all metrics/circuits. Additional QEC cycles sometimes degrade performance (e.g., n_QEC = 4 introduces excess errors), indicating the overhead of FT primitives still exceeds error suppression in deeper circuits.

Steane code limitations — The [[7,1,3]] code has distance 3, offering modest protection. Logical error rates remain higher than ideal for large-scale utility. The authors do note future needs for dynamical decoupling and advanced decoding to suppress errors further.

Scale remains modest — Largest QAOA uses 12 logical qubits and ~2000 physical gates — impressive for FT, but far from utility-scale problems (e.g., thousands of logical qubits, millions of gates). HHL is on a “structured toy problem,” not a challenging real-world instance.

Reliance on post-selection and filtering — For RUS and error-detected shots, results often use post-selection or filtering (discarding bad runs), which incurs overhead and isn’t fully fault-tolerant in a scalable sense (true FT aims to avoid heavy post-selection via better codes/schemes).

Hardware-specific — Results are tied to Quantinuum’s high-fidelity trapped ions with all-to-all connectivity and dynamic features. Porting to other architectures (e.g., superconducting) would face different challenges (e.g., lower connectivity, slower gates).

No quantum advantage claimed — No evidence of speedup over classical solvers for the problems run (QAOA/HHL instances are small/toy). The focus is hardware milestone, not algorithmic superiority.

Potential optimism in interpretation — Claims of “near-break-even performance of complex, error-corrected algorithmic quantum circuits” are fair but qualified — it’s near for some shallow circuits, but noise accumulation limits deeper ones. The field still lacks consensus on exact “break-even” definitions for full algorithms.
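To see why heavy post-selection is a scalability concern, it helps to look at the arithmetic. The Python sketch below is illustrative only: the acceptance rates are made-up numbers, not figures from the paper, and it assumes each post-selected stage accepts independently with the same probability, which is a simplification on my part.

```python
import math


def shots_needed(target_accepted, acceptance_rate):
    """Total shots to run, on average, to keep `target_accepted` shots."""
    if not 0 < acceptance_rate <= 1:
        raise ValueError("acceptance_rate must be in (0, 1]")
    return math.ceil(target_accepted / acceptance_rate)


def overall_acceptance(per_stage_rate, stages):
    """Acceptance rate after several independent post-selected stages."""
    return per_stage_rate ** stages


# Hypothetical per-stage acceptance rate of 50%.
for stages in (1, 2, 3):
    rate = overall_acceptance(0.5, stages)
    print(stages, "stage(s): keep", rate, "of shots, run",
          shots_needed(1000, rate), "shots for 1000 accepted")
```

Under these assumptions the shot count doubles with every added post-selected stage, so a filter that is cheap on a shallow circuit becomes expensive on a deep one.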

Summary

The JPMorgan Chase/Quantinuum effort is a high-quality, rigorously executed demonstration that meaningfully advances the field by integrating the full FT stack (encoding, universal gates, QEC cycles, dynamic feedback) for algorithm execution. It’s not a revolution (no fault-tolerant quantum supremacy or utility yet), but it is a strong incremental step, and one of the most complete FT algorithm runs on hardware to date. It sets a new benchmark for what near-term devices can achieve with careful co-design between hardware (Quantinuum) and applications/algorithms (JPMorgan Chase). Future work building on this (e.g., better codes like color codes or surface codes, higher distances, or larger systems) will be exciting to watch.

So using the template helps me understand the nature of a new advance quickly and in context. Whether for my consulting clients, my writing or my public speaking events, the template offers a speed-up in understanding current events in the quantum world.

Brian Lenahan is founder and chair of the Quantum Strategy Institute, author of seven Amazon-published books on quantum technologies and artificial intelligence, and a Substack Top 50 Rising in Technology. Brian’s focus on the practical side of technology ensures you will get the guidance and inspiration you need to gain value from quantum now and into the future. Brian does not purport to be an expert in each field or subfield for which he provides science communication.

Brian’s books are available on Amazon. Quantum Strategy for Business course is available on the QURECA platform.

Copyright © 2026 Aquitaine Innovation Advisors